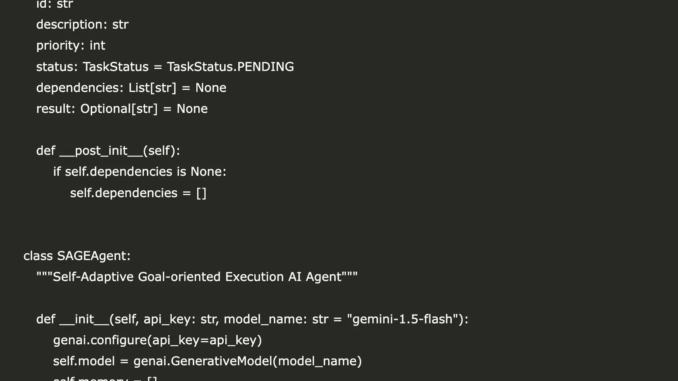

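import json
import time
from dataclasses import dataclass, asdict
from enum import Enum
from typing import Any, Dict, List, Optional

import google.generativeai as genai


class TaskStatus(Enum):
    # Task lifecycle states referenced throughout the agent (string values assumed)
    PENDING = "pending"
    IN_PROGRESS = "in_progress"
    COMPLETED = "completed"
    FAILED = "failed"


@dataclass
class Task:
    id: str
    description: str
    priority: int
    status: TaskStatus = TaskStatus.PENDING
    dependencies: Optional[List[str]] = None
    result: Optional[str] = None

    def __post_init__(self):
        # Default to None and build a fresh list per task,
        # so instances never share one mutable list
        if self.dependencies is None:
            self.dependencies = []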
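Two `dataclasses` behaviors shape how `Task` is used later. Defaulting `dependencies` to `None` and filling it in `__post_init__` gives every task its own list (`field(default_factory=list)` is the equivalent built-in idiom), and `asdict()` copies the `status` field as a `TaskStatus` member rather than its string value. The standalone sketch below re-declares a trimmed, illustrative `Task` and `TaskStatus` so that it runs on its own. It shows why a status read from `asdict()` output should be compared with `TaskStatus.COMPLETED` rather than with the string `"completed"`.

```python
from dataclasses import asdict, dataclass, field
from enum import Enum
from typing import List, Optional


class TaskStatus(Enum):
    PENDING = "pending"
    COMPLETED = "completed"


@dataclass
class Task:
    id: str
    priority: int
    status: TaskStatus = TaskStatus.PENDING
    dependencies: Optional[List[str]] = None

    def __post_init__(self):
        # Replace the None sentinel with a fresh list for each instance
        if self.dependencies is None:
            self.dependencies = []


@dataclass
class TaskWithFactory:
    # Built-in alternative: dataclasses calls list() once per instance
    id: str
    dependencies: List[str] = field(default_factory=list)


a, b = Task("task_1", 5), Task("task_2", 4)
a.dependencies.append("setup")
print(b.dependencies)                              # [] (lists are not shared)
print(TaskWithFactory("task_3").dependencies)      # []

snapshot = asdict(Task("task_4", 3, status=TaskStatus.COMPLETED))
print(snapshot["status"] == "completed")           # False: still an Enum member
print(snapshot["status"] == TaskStatus.COMPLETED)  # True
print(snapshot["status"].value)                    # completed
```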

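class SAGEAgent:
    """Self-Adaptive Goal-oriented Execution AI Agent"""

    def __init__(self, api_key: str, model_name: str = "gemini-1.5-flash"):
        genai.configure(api_key=api_key)
        self.model = genai.GenerativeModel(model_name)
        self.memory = []         # experience records appended by integrate_experience()
        self.tasks = {}          # task id -> Task, kept across iterations
        self.context = {}        # execution statistics maintained by _update_context()
        self.iteration_count = 0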

    def self_assess(self, goal: str, context: Dict[str, Any]) -> Dict[str, Any]:
        """S: Self-Assessment – Evaluate current state and capabilities"""
        assessment_prompt = f"""
        You are an AI agent conducting self-assessment. Respond ONLY with valid JSON, no additional text.

        GOAL: {goal}
        CONTEXT: {json.dumps(context, indent=2)}
        TASKS_PROCESSED: {len(self.tasks)}

        Provide assessment as JSON with these exact keys:
        {{
            "progress_score": <number 0-100>,
            "resources": ["list of available resources"],
            "gaps": ["list of knowledge gaps"],
            "risks": ["list of potential risks"],
            "recommendations": ["list of next steps"]
        }}
        """
        response = self.model.generate_content(assessment_prompt)
        try:
            text = response.text.strip()
            # Strip a Markdown code fence (optionally tagged "json") if the model added one
            if text.startswith('```'):
                text = text.split('```')[1]
                if text.startswith('json'):
                    text = text[4:]
            text = text.strip()
            return json.loads(text)
        except Exception as e:
            print(f"Assessment parsing error: {e}")
            # Fallback assessment so the cycle can continue
            return {
                "progress_score": 25,
                "resources": ["AI capabilities", "Internet knowledge"],
                "gaps": ["Specific domain expertise", "Real-time data"],
                "risks": ["Information accuracy", "Scope complexity"],
                "recommendations": ["Break down into smaller tasks", "Focus on research first"]
            }

    def adaptive_plan(self, goal: str, assessment: Dict[str, Any]) -> List[Task]:
        """A: Adaptive Planning – Create dynamic, context-aware task decomposition"""
        planning_prompt = f"""
        You are an AI task planner. Respond ONLY with valid JSON array, no additional text.

        MAIN_GOAL: {goal}
        ASSESSMENT: {json.dumps(assessment, indent=2)}

        Create 3-4 actionable tasks as JSON array:
        [
            {{
                "id": "task_1",
                "description": "Clear, specific task description",
                "priority": 5,
                "dependencies": []
            }},
            {{
                "id": "task_2",
                "description": "Another specific task",
                "priority": 4,
                "dependencies": ["task_1"]
            }}
        ]

        Each task must have: id (string), description (string), priority (1-5), dependencies (array of strings)
        """
        response = self.model.generate_content(planning_prompt)
        try:
            text = response.text.strip()
            if text.startswith('```'):
                text = text.split('```')[1]
                if text.startswith('json'):
                    text = text[4:]
            text = text.strip()
            task_data = json.loads(text)

            tasks = []
            for i, task_info in enumerate(task_data):
                task = Task(
                    id=task_info.get('id', f'task_{i+1}'),
                    description=task_info.get('description', 'Undefined task'),
                    # int() so a string priority such as "5" cannot break sorting later
                    priority=int(task_info.get('priority', 3)),
                    dependencies=task_info.get('dependencies', [])
                )
                tasks.append(task)
            return tasks
        except Exception as e:
            print(f"Planning parsing error: {e}")
            # Fallback plan, hard-coded to an urban-gardening topic
            return [
                Task(id="research_1", description="Research sustainable urban gardening basics", priority=5),
                Task(id="research_2", description="Identify space-efficient growing methods", priority=4),
                Task(id="compile_1", description="Organize findings into structured guide", priority=3,
                     dependencies=["research_1", "research_2"])
            ]

    def execute_goal_oriented(self, task: Task) -> str:
        """G: Goal-oriented Execution – Execute specific task with focused attention"""
        execution_prompt = f"""
        GOAL-ORIENTED EXECUTION:
        Task: {task.description}
        Priority: {task.priority}
        Context: {json.dumps(self.context, indent=2)}

        Execute this task step-by-step:
        1. Break down the task into concrete actions
        2. Execute each action methodically
        3. Validate results at each step
        4. Provide comprehensive output

        Focus on practical, actionable results. Be specific and thorough.
        """
        response = self.model.generate_content(execution_prompt)
        return response.text.strip()

    def integrate_experience(self, task: Task, result: str, success: bool) -> Dict[str, Any]:
        """E: Experience Integration – Learn from outcomes and update knowledge"""
        integration_prompt = f"""
        You are learning from task execution. Respond ONLY with valid JSON, no additional text.

        TASK: {task.description}
        RESULT: {result[:200]}...
        SUCCESS: {success}

        Provide learning insights as JSON:
        {{
            "learnings": ["key insight 1", "key insight 2"],
            "patterns": ["pattern observed 1", "pattern observed 2"],
            "adjustments": ["adjustment for future 1", "adjustment for future 2"],
            "confidence_boost": <number -10 to 10>
        }}
        """
        response = self.model.generate_content(integration_prompt)
        try:
            text = response.text.strip()
            if text.startswith('```'):
                text = text.split('```')[1]
                if text.startswith('json'):
                    text = text[4:]
            text = text.strip()
            experience = json.loads(text)
            experience['task_id'] = task.id
            experience['timestamp'] = time.time()
            self.memory.append(experience)
            return experience
        except Exception as e:
            print(f"Experience parsing error: {e}")
            experience = {
                "learnings": [f"Completed task: {task.description}"],
                "patterns": ["Task execution follows planned approach"],
                "adjustments": ["Continue systematic approach"],
                "confidence_boost": 5 if success else -2,
                "task_id": task.id,
                "timestamp": time.time()
            }
            self.memory.append(experience)
            return experience

    def execute_sage_cycle(self, goal: str, max_iterations: int = 3) -> Dict[str, Any]:
        """Execute complete SAGE cycle for goal achievement"""
        print(f"🎯 Starting SAGE cycle for goal: {goal}")
        results = {"goal": goal, "iterations": [], "final_status": "unknown"}

        for iteration in range(max_iterations):
            self.iteration_count += 1
            print(f"\n🔄 SAGE Iteration {iteration + 1}")

            print("📊 Self-Assessment...")
            assessment = self.self_assess(goal, self.context)
            print(f"Progress Score: {assessment.get('progress_score', 0)}/100")

            print("🗺️ Adaptive Planning...")
            tasks = self.adaptive_plan(goal, assessment)
            print(f"Generated {len(tasks)} tasks")

            print("⚡ Goal-oriented Execution...")
            iteration_results = []
            # Highest priority first; tasks whose dependencies are not yet completed are skipped
            for task in sorted(tasks, key=lambda x: x.priority, reverse=True):
                if self._dependencies_met(task):
                    print(f"  Executing: {task.description}")
                    task.status = TaskStatus.IN_PROGRESS
                    try:
                        result = self.execute_goal_oriented(task)
                        task.result = result
                        task.status = TaskStatus.COMPLETED
                        success = True
                        print(f"  ✅ Completed: {task.id}")
                    except Exception as e:
                        task.status = TaskStatus.FAILED
                        task.result = f"Error: {str(e)}"
                        success = False
                        print(f"  ❌ Failed: {task.id}")

                    experience = self.integrate_experience(task, task.result, success)
                    self.tasks[task.id] = task
                    iteration_results.append({
                        "task": asdict(task),
                        "experience": experience
                    })

            self._update_context(iteration_results)
            results["iterations"].append({
                "iteration": iteration + 1,
                "assessment": assessment,
                "tasks_generated": len(tasks),
                # asdict() keeps the Enum member, so compare with TaskStatus, not a string
                "tasks_completed": len([r for r in iteration_results
                                        if r["task"]["status"] == TaskStatus.COMPLETED]),
                "results": iteration_results
            })

            if assessment.get('progress_score', 0) >= 90:
                results["final_status"] = "achieved"
                print("🎉 Goal achieved!")
                break

        if results["final_status"] == "unknown":
            results["final_status"] = "in_progress"
        return results

    def _dependencies_met(self, task: Task) -> bool:
        """Check if task dependencies are satisfied"""
        for dep_id in task.dependencies:
            if dep_id not in self.tasks or self.tasks[dep_id].status != TaskStatus.COMPLETED:
                return False
        return True

    def _update_context(self, results: List[Dict[str, Any]]):
        """Update agent context based on execution results"""
        # asdict() keeps the Enum member, so compare with TaskStatus, not a string
        completed_tasks = [r for r in results if r["task"]["status"] == TaskStatus.COMPLETED]
        self.context.update({
            "completed_tasks": len(completed_tasks),
            "total_tasks": len(self.tasks),
            "success_rate": len(completed_tasks) / len(results) if results else 0,
            "last_update": time.time()
        })
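The parsing blocks in `self_assess`, `adaptive_plan`, and `integrate_experience` repeat the same fence-stripping steps, and they only handle replies that *begin* with a fence. A possible refactor is a single hypothetical `extract_json` helper (not part of the original agent) that searches anywhere in the reply for a Markdown code fence, optionally tagged `json`, and otherwise parses the raw text. This sketch assumes a reply contains at most one fenced block. It still raises `json.JSONDecodeError` on malformed output, so each caller's `except` fallback stays in place.

```python
import json
import re
from typing import Any

FENCE = "`" * 3  # three backticks, built at runtime so this listing has no literal fence

# Captures the body of the first fenced block, with an optional "json" language tag
_FENCE_RE = re.compile(
    re.escape(FENCE) + r"(?:json)?\s*(.*?)\s*" + re.escape(FENCE),
    re.DOTALL | re.IGNORECASE,
)


def extract_json(text: str) -> Any:
    """Parse JSON from a model reply, tolerating a surrounding Markdown code fence."""
    text = text.strip()
    match = _FENCE_RE.search(text)
    if match:
        text = match.group(1)
    # Raises json.JSONDecodeError on malformed output; callers keep their fallbacks
    return json.loads(text)


fenced = "Here is the plan:\n" + FENCE + 'json\n{"progress_score": 40}\n' + FENCE
print(extract_json(fenced))                  # {'progress_score': 40}
print(extract_json('[{"id": "task_1"}]'))    # [{'id': 'task_1'}]
```

With such a helper, each `try` block could reduce to a call like `return extract_json(response.text)`.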
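The planning prompt asks for `priority` in the range 1-5 and `dependencies` as an array of strings, but nothing checks the reply against those constraints. A hypothetical `normalize_task_info` helper, not part of the original agent, could coerce each planner entry before it becomes a `Task`. The defaults (`task_{i+1}`, `"Undefined task"`, priority 3) mirror the ones `adaptive_plan` already uses. The clamping and the wrapping of a bare string into a list are assumptions about how to repair bad entries.

```python
from typing import Any, Dict


def normalize_task_info(raw: Dict[str, Any], index: int) -> Dict[str, Any]:
    """Coerce one planner entry to the schema the planning prompt requests (hypothetical helper)."""
    try:
        priority = int(raw.get("priority", 3))
    except (TypeError, ValueError):
        priority = 3  # same default adaptive_plan uses
    deps = raw.get("dependencies") or []
    if not isinstance(deps, list):
        deps = [deps]  # assumption: a bare string means a single dependency
    return {
        "id": str(raw.get("id") or f"task_{index + 1}"),
        "description": str(raw.get("description") or "Undefined task"),
        "priority": min(5, max(1, priority)),  # clamp to the 1-5 range the prompt asks for
        "dependencies": [str(d) for d in deps],
    }


entry = {"id": "task_2", "priority": "9", "dependencies": "task_1"}
print(normalize_task_info(entry, 1))
# {'id': 'task_2', 'description': 'Undefined task', 'priority': 5, 'dependencies': ['task_1']}
```

Because the returned keys match the `Task` fields, the loop body in `adaptive_plan` could then become `Task(**normalize_task_info(task_info, i))`.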
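`execute_sage_cycle` runs tasks in descending priority, and `_dependencies_met` silently skips any task whose prerequisites are not completed yet. If the planner ranks a dependent task above its prerequisites, that task is skipped for the iteration, unless a task with the same prerequisite ID already completed in an earlier iteration (because `self.tasks` persists). One alternative is Kahn's topological sort with a priority queue: among the tasks whose dependencies are satisfied, always run the highest-priority one next. This technique is swapped in here and is not taken from the agent. The sketch operates on hypothetical `(id, priority, dependencies)` tuples instead of `Task` objects. It also assumes that dependencies on IDs outside the current plan are already satisfied.

```python
import heapq
from typing import Dict, List, Tuple

# Hypothetical simplified task record: (id, priority, dependency ids)
TaskSpec = Tuple[str, int, List[str]]


def execution_order(tasks: List[TaskSpec]) -> List[str]:
    """Kahn's algorithm: dependencies first, highest priority among ready tasks."""
    priority = {tid: prio for tid, prio, _ in tasks}
    # Assumption: dependencies outside this plan are treated as already satisfied
    deps = {tid: {d for d in ds if d in priority} for tid, _, ds in tasks}
    dependents: Dict[str, List[str]] = {tid: [] for tid in priority}
    for tid, ds in deps.items():
        for d in ds:
            dependents[d].append(tid)

    # heapq is a min-heap, so push negative priority to pop the highest first
    ready = [(-priority[tid], tid) for tid, ds in deps.items() if not ds]
    heapq.heapify(ready)
    order: List[str] = []
    while ready:
        _, tid = heapq.heappop(ready)
        order.append(tid)
        for child in dependents[tid]:
            deps[child].discard(tid)
            if not deps[child]:
                heapq.heappush(ready, (-priority[child], child))

    if len(order) != len(tasks):
        raise ValueError("dependency cycle detected")
    return order


plan = [
    ("compile_1", 5, ["research_1", "research_2"]),  # ranked highest, but depends on both
    ("research_1", 4, []),
    ("research_2", 3, []),
]
print(execution_order(plan))  # ['research_1', 'research_2', 'compile_1']
```

Sorting this plan by priority alone would try `compile_1` first and skip it, whereas the topological order runs all three tasks.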
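Every model call in `SAGEAgent` goes through `self.model.generate_content(prompt)` and reads only the `.text` attribute of the result. Because of that, the parsing and control flow can be exercised offline with a stand-in object. The hypothetical `FakeModel` below is not part of `google.generativeai`. It replays canned replies in order and records the prompts it receives.

```python
import json
from dataclasses import dataclass, field
from typing import List


@dataclass
class FakeResponse:
    text: str  # the only response attribute SAGEAgent reads


@dataclass
class FakeModel:
    """Hypothetical offline stand-in for genai.GenerativeModel that replays canned replies."""
    replies: List[str]
    prompts: List[str] = field(default_factory=list)  # every prompt received, for inspection

    def __post_init__(self):
        self.replies = list(self.replies)  # copy so the caller's list is not consumed

    def generate_content(self, prompt: str) -> FakeResponse:
        self.prompts.append(prompt)
        if not self.replies:
            raise RuntimeError("FakeModel ran out of canned replies")
        return FakeResponse(self.replies.pop(0))


model = FakeModel(['{"progress_score": 95, "recommendations": []}'])
reply = model.generate_content("GOAL: demo")
print(json.loads(reply.text)["progress_score"])  # 95
print(model.prompts)                              # ['GOAL: demo']
```

After constructing an agent, assigning `agent.model = FakeModel([...])` with one reply per expected call would let `self_assess` and `adaptive_plan` run without network access. This assumes the constructor itself makes no API request.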