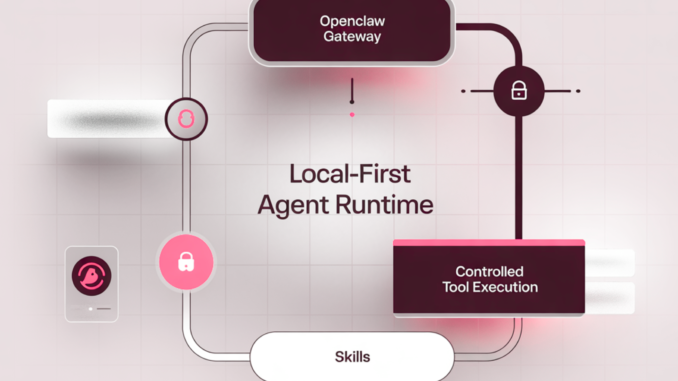

In this tutorial, we build and operate a fully local, schema-valid OpenClaw runtime. We configure the OpenClaw gateway with strict loopback binding, set up authenticated model access through environment variables, and define a secure execution environment using the built-in exec tool. We then create a structured custom skill that the OpenClaw agent can discover and invoke deterministically. Instead of manually running Python scripts, we allow OpenClaw to orchestrate model reasoning, skill selection, and controlled tool execution through its agent runtime. Throughout the process, we focus on OpenClaw’s architecture, gateway control plane, agent defaults, model routing, and skill abstraction to understand how OpenClaw coordinates autonomous behavior in a secure, local-first setup.

```python
import os
import re
import json
import time
import shlex
import pathlib
import subprocess
import textwrap
from getpass import getpass

def sh(cmd, check=True, capture=False, env=None):
    """Run `cmd` through a bash login shell; return stdout only when capture=True."""
    p = subprocess.run(
        ["bash", "-lc", cmd],
        check=check,
        text=True,
        capture_output=capture,
        env=env or os.environ.copy(),
    )
    return p.stdout if capture else None

def require_secret_env(var="OPENAI_API_KEY"):
    """Prompt for a secret without echoing it, unless it is already set."""
    if os.environ.get(var, "").strip():
        return
    key = getpass(f"Enter {var} (hidden): ").strip()
    if not key:
        raise RuntimeError(f"{var} is required.")
    os.environ[var] = key

def install_node_22_and_openclaw():
    """Install Node.js 22 from NodeSource, then the OpenClaw CLI globally via npm."""
    sh("sudo apt-get update -y")
    sh("sudo apt-get install -y ca-certificates curl gnupg")
    sh("curl -fsSL https://deb.nodesource.com/setup_22.x | sudo -E bash -")
    sh("sudo apt-get install -y nodejs")
    sh("node -v && npm -v")
    sh("npm install -g openclaw@latest")
    sh("openclaw --version", check=False)
```

We define the core utility functions that run shell commands, prompt for the API key without echoing it, and install OpenClaw together with the Node.js 22 runtime it requires. The `sh` helper routes every command through `bash -lc`, giving Python a single control interface to the OpenClaw CLI. Here, we prepare the environment so that OpenClaw can function as the central agent runtime inside Colab.
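One limitation of `sh` as written: with `capture=True` it returns only stdout, so when a command run with `check=False` fails, its error text is discarded. The minimal sketch below, `run_cmd` (a hypothetical helper, not part of the tutorial), returns the whole `subprocess.CompletedProcess` so stdout, stderr, and the exit code all stay available. It assumes `bash -c` rather than `bash -lc` so login-profile output cannot leak into captured stdout; switch back if you rely on PATH entries set in a profile.

```python
import os
import subprocess

def run_cmd(cmd, env=None, timeout=None):
    """Run `cmd` under bash and return the CompletedProcess (hypothetical helper).

    Unlike the tutorial's `sh`, this never raises on a non-zero exit and keeps
    stderr, so the caller decides how to report failures.
    """
    return subprocess.run(
        ["bash", "-c", cmd],
        text=True,
        capture_output=True,
        env=env or os.environ.copy(),
        timeout=timeout,
    )

if __name__ == "__main__":
    r = run_cmd("echo hi; echo oops >&2; exit 3")
    print("exit code:", r.returncode)
    print("stdout:", r.stdout.strip())
    print("stderr:", r.stderr.strip())
```

With this shape, a failed CLI call can be reported as `print(r.returncode, r.stderr)` instead of silently printing an empty string.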

```python
def write_openclaw_config_valid():
    """Write ~/.openclaw/openclaw.json and return its path."""
    home = pathlib.Path.home()
    base = home / ".openclaw"
    workspace = base / "workspace"
    (workspace / "skills").mkdir(parents=True, exist_ok=True)

    cfg = {
        "gateway": {
            "mode": "local",
            "port": 18789,
            "bind": "loopback",        # only reachable from this machine
            "auth": {"mode": "none"},  # acceptable only because of the loopback bind
            "controlUi": {
                "enabled": True,
                "basePath": "/openclaw",
                "dangerouslyDisableDeviceAuth": True,
            },
        },
        "agents": {
            "defaults": {
                "workspace": str(workspace),
                "model": {"primary": "openai/gpt-4o-mini"},
            }
        },
        "tools": {
            "exec": {
                "backgroundMs": 10000,
                "timeoutSec": 1800,
                "cleanupMs": 1800000,
                "notifyOnExit": True,
                "notifyOnExitEmptySuccess": False,
                "applyPatch": {"enabled": False, "allowModels": ["openai/gpt-5.2"]},
            }
        },
    }

    base.mkdir(parents=True, exist_ok=True)
    (base / "openclaw.json").write_text(json.dumps(cfg, indent=2))
    return str(base / "openclaw.json")

def start_gateway_background():
    """Start the gateway with nohup and poll its log until it reports port 18789."""
    sh("rm -f /tmp/openclaw_gateway.log /tmp/openclaw_gateway.pid", check=False)
    sh("nohup openclaw gateway --port 18789 --bind loopback --verbose "
       "> /tmp/openclaw_gateway.log 2>&1 & echo $! > /tmp/openclaw_gateway.pid")
    for _ in range(60):  # wait up to ~60 seconds
        time.sleep(1)
        log = sh("tail -n 120 /tmp/openclaw_gateway.log || true", capture=True, check=False) or ""
        if re.search(r"(listening|ready|ws|http).*18789|18789.*listening", log, re.IGNORECASE):
            return True
    print("Gateway log tail:\n", sh("tail -n 220 /tmp/openclaw_gateway.log || true", capture=True, check=False))
    raise RuntimeError("OpenClaw gateway did not start cleanly.")
```

We write a schema-valid OpenClaw configuration file and initialize the local gateway settings, defining the workspace, model routing, and exec tool behavior according to the official OpenClaw configuration structure. We then start the gateway in the background in loopback mode and poll its log for up to 60 seconds, failing with the log tail if it never reports that it is listening on port 18789.
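The tutorial's own RAG corpus notes that the gateway refuses to start if `openclaw.json` contains unknown keys. A local pre-check can catch obvious typos before launching it. The sketch below walks a config dict against an allowed-key tree; `ALLOWED` is a hypothetical, hand-written subset covering only the keys this tutorial writes, not OpenClaw's real schema, so `openclaw doctor` remains the authority.

```python
# Hypothetical subset of allowed keys; NOT the real OpenClaw schema.
# A dict value means "object with these keys"; None means "leaf, any value".
ALLOWED = {
    "gateway": {
        "mode": None, "port": None, "bind": None,
        "auth": {"mode": None},
        "controlUi": {"enabled": None, "basePath": None,
                      "dangerouslyDisableDeviceAuth": None},
    },
    "agents": {"defaults": {"workspace": None, "model": {"primary": None}}},
    "tools": {
        "exec": {
            "backgroundMs": None, "timeoutSec": None, "cleanupMs": None,
            "notifyOnExit": None, "notifyOnExitEmptySuccess": None,
            "applyPatch": {"enabled": None, "allowModels": None},
        }
    },
}

def find_unknown_keys(cfg, allowed, prefix=""):
    """Return dotted paths of keys in `cfg` that `allowed` does not list."""
    unknown = []
    for key, value in cfg.items():
        path = f"{prefix}{key}"
        if key not in allowed:
            unknown.append(path)
        elif isinstance(allowed[key], dict) and isinstance(value, dict):
            # Recurse into nested objects that the allowed tree also describes.
            unknown.extend(find_unknown_keys(value, allowed[key], path + "."))
    return unknown

if __name__ == "__main__":
    bad = {
        "agents": {"defaults": {"thinking": "high",
                                "model": {"primary": "openai/gpt-4o-mini"}}},
        "tools": {"exec": {"enabled": True, "timeoutSec": 1800}},
    }
    print(find_unknown_keys(bad, ALLOWED))
```

Running it flags exactly the two keys named in the tutorial's demo question, `agents.defaults.thinking` and `tools.exec.enabled`.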

```python
def pick_model_from_openclaw():
    """Ask the CLI which models it knows and pick a preferred OpenAI model."""
    out = sh("openclaw models list --json", capture=True, check=False) or ""
    refs = []
    try:
        data = json.loads(out)
        # The output may be a bare list or a dict wrapping the list under one of these keys.
        if isinstance(data, dict):
            for k in ["models", "items", "list"]:
                if isinstance(data.get(k), list):
                    data = data[k]
                    break
        if isinstance(data, list):
            for it in data:
                if isinstance(it, str) and "/" in it:
                    refs.append(it)
                elif isinstance(it, dict):
                    # Take the first field that looks like a "provider/model" reference.
                    for key in ["ref", "id", "model", "name"]:
                        v = it.get(key)
                        if isinstance(v, str) and "/" in v:
                            refs.append(v)
                            break
    except json.JSONDecodeError:
        pass  # unparseable output: fall through to the default below

    refs = [r for r in refs if r.startswith("openai/")]
    preferred = ["openai/gpt-4o-mini", "openai/gpt-4.1-mini", "openai/gpt-4o",
                 "openai/gpt-5.2-mini", "openai/gpt-5.2"]
    for p in preferred:
        if p in refs:
            return p
    return refs[0] if refs else "openai/gpt-4o-mini"

def set_default_model(model_ref):
    """Persist the chosen model as agents.defaults.model.primary."""
    sh(f"openclaw config set agents.defaults.model.primary {shlex.quote(model_ref)}", check=False)
```

We query OpenClaw for its available models, keep only OpenAI-provider references, and pick the first match from a preference list, falling back to `openai/gpt-4o-mini` if nothing usable comes back. We then write that choice to `agents.defaults.model.primary`, so OpenClaw routes all agent reasoning through it while handling model abstraction and provider authentication itself.
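Because the exact JSON shape of `openclaw models list --json` is not pinned down here, `pick_model_from_openclaw` probes several layouts. Pulling the parsing into a pure function makes that fallback logic testable without the CLI. In the sketch below, `extract_refs` and `choose_model` are hypothetical names, and the sample payloads used to exercise them are invented, not real CLI output.

```python
import json

PREFERRED = ["openai/gpt-4o-mini", "openai/gpt-4.1-mini", "openai/gpt-4o",
             "openai/gpt-5.2-mini", "openai/gpt-5.2"]
DEFAULT = "openai/gpt-4o-mini"

def extract_refs(raw):
    """Collect 'provider/model' strings from a JSON list, or a dict wrapping one."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if isinstance(data, dict):
        # Unwrap {"models": [...]}, {"items": [...]} or {"list": [...]}.
        data = next((data[k] for k in ("models", "items", "list")
                     if isinstance(data.get(k), list)), data)
    refs = []
    if isinstance(data, list):
        for item in data:
            if isinstance(item, str) and "/" in item:
                refs.append(item)
            elif isinstance(item, dict):
                # First field that looks like a model reference wins.
                ref = next((item[k] for k in ("ref", "id", "model", "name")
                            if isinstance(item.get(k), str) and "/" in item[k]), None)
                if ref:
                    refs.append(ref)
    return refs

def choose_model(raw, preferred=PREFERRED, default=DEFAULT):
    """First preferred OpenAI model present, else any OpenAI model, else default."""
    refs = [r for r in extract_refs(raw) if r.startswith("openai/")]
    for p in preferred:
        if p in refs:
            return p
    return refs[0] if refs else default
```

Wired into the tutorial, this would read `choose_model(sh("openclaw models list --json", capture=True, check=False) or "")`.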

```python
def create_custom_skill_rag():
    """Create the colab_rag_lab skill: a RAG script plus a SKILL.md that pins how to call it."""
    home = pathlib.Path.home()
    skill_dir = home / ".openclaw" / "workspace" / "skills" / "colab_rag_lab"
    skill_dir.mkdir(parents=True, exist_ok=True)

    tool_py = skill_dir / "rag_tool.py"
    tool_py.write_text(textwrap.dedent(r"""
        import sys, re, subprocess

        def pip(*args):
            subprocess.check_call([sys.executable, "-m", "pip", "-q", "install", *args])

        q = " ".join(sys.argv[1:]).strip()
        if not q:
            print("Usage: python3 rag_tool.py <question>", file=sys.stderr)
            raise SystemExit(2)

        # Install retrieval dependencies lazily on first use.
        try:
            import numpy as np
        except ImportError:
            pip("numpy"); import numpy as np
        try:
            import faiss
        except ImportError:
            pip("faiss-cpu"); import faiss
        try:
            from sentence_transformers import SentenceTransformer
        except ImportError:
            pip("sentence-transformers"); from sentence_transformers import SentenceTransformer

        CORPUS = [
            ("OpenClaw basics", "OpenClaw runs an agent runtime behind a local gateway and can execute tools and skills in a controlled way."),
            ("Strict config schema", "OpenClaw gateway refuses to start if openclaw.json has unknown keys; use openclaw doctor to diagnose issues."),
            ("Exec tool config", "tools.exec config sets timeouts and behavior; it does not use an enabled flag in the config schema."),
            ("Gateway auth", "Even on localhost, gateway auth exists; auth.mode can be none for trusted loopback-only setups."),
            ("Skills", "Skills define repeatable tool-use patterns; agents can select a skill and then call exec with a fixed command template."),
        ]

        # Split each document into sentence chunks labelled "Title#n".
        docs = []
        for title, body in CORPUS:
            sents = re.split(r'(?<=[.!?])\s+', body.strip())
            for i, s in enumerate(sents):
                s = s.strip()
                if s:
                    docs.append((f"{title}#{i+1}", s))

        # Normalized embeddings + inner-product index = cosine-similarity search.
        model = SentenceTransformer("all-MiniLM-L6-v2")
        emb = model.encode([d[1] for d in docs], normalize_embeddings=True).astype("float32")
        index = faiss.IndexFlatIP(emb.shape[1])
        index.add(emb)

        q_emb = model.encode([q], normalize_embeddings=True).astype("float32")
        D, I = index.search(q_emb, 4)

        hits = []
        for score, idx in zip(D[0].tolist(), I[0].tolist()):
            if idx >= 0:  # FAISS pads missing results with -1
                ref, txt = docs[idx]
                hits.append((score, ref, txt))

        print("Answer (grounded to retrieved snippets):\n")
        print("Question:", q, "\n")
        print("Key points:")
        for score, ref, txt in hits:
            print(f"- ({score:.3f}) {txt} [{ref}]")
        print("\nCitations:")
        for _, ref, _ in hits:
            print(f"- {ref}")
    """).strip() + "\n")
    sh(f"chmod +x {shlex.quote(str(tool_py))}")

    # SKILL.md: YAML front matter plus the fixed command the agent must run.
    skill_md = skill_dir / "SKILL.md"
    skill_md.write_text(textwrap.dedent(f"""
        ---
        name: colab_rag_lab
        description: Deterministic local RAG invoked via a fixed exec command.
        ---

        # Colab RAG Lab

        ## Tooling rule (strict)
        Always run exactly:
        `python3 {tool_py} "<QUESTION>"`

        ## Output rule
        Return the tool output verbatim.
    """).strip() + "\n")
```

We construct a custom OpenClaw skill inside the designated workspace directory. We define a deterministic execution pattern in SKILL.md and pair it with a structured RAG tool script that the agent can invoke. We rely on OpenClaw’s skill-loading mechanism to automatically register and operationalize this tool within the agent runtime.
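The retrieval step in `rag_tool.py` rests on one identity: for L2-normalized vectors, the inner product equals cosine similarity, so `faiss.IndexFlatIP` over `normalize_embeddings=True` outputs is an exact (brute-force) cosine top-k search. The dependency-free sketch below reproduces the same chunk, normalize, and inner-product ranking pipeline, with a toy bag-of-words embedding swapped in for `all-MiniLM-L6-v2`, so its scores measure word overlap rather than semantic similarity. `embed`, `dot`, `chunk`, and `search` are illustrative names, not part of the tutorial.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: L2-normalized term counts (stands in for a neural encoder)."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {tok: c / norm for tok, c in counts.items()}

def dot(a, b):
    """Sparse inner product; equals cosine similarity because both are unit length."""
    if len(a) > len(b):
        a, b = b, a
    return sum(v * b.get(k, 0.0) for k, v in a.items())

def chunk(corpus):
    """Split each (title, body) into sentence chunks labelled 'Title#n', as rag_tool.py does."""
    docs = []
    for title, body in corpus:
        for i, s in enumerate(re.split(r"(?<=[.!?])\s+", body.strip())):
            s = s.strip()
            if s:
                docs.append((f"{title}#{i + 1}", s))
    return docs

def search(query, docs, k=4):
    """Brute-force top-k by inner product, like faiss.IndexFlatIP."""
    q = embed(query)
    scored = [(dot(q, embed(text)), ref, text) for ref, text in docs]
    scored.sort(key=lambda t: t[0], reverse=True)
    return scored[:k]

CORPUS = [
    ("Strict config schema", "OpenClaw gateway refuses to start if openclaw.json has unknown keys; use openclaw doctor to diagnose issues."),
    ("Exec tool config", "tools.exec config sets timeouts and behavior; it does not use an enabled flag in the config schema."),
    ("Gateway auth", "Even on localhost, gateway auth exists; auth.mode can be none for trusted loopback-only setups."),
]

if __name__ == "__main__":
    for score, ref, txt in search("why does the gateway refuse unknown keys", chunk(CORPUS), k=2):
        print(f"- ({score:.3f}) {txt} [{ref}]")
```

Swapping `embed` for a sentence-embedding model changes only the scoring; the chunking, normalization, and top-k logic stay the same.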

```python
def refresh_skills():
    """Run a cheap, low-thinking agent turn intended to make the runtime pick up the new skill."""
    sh('openclaw agent --message "refresh skills" --thinking low', check=False)

def run_openclaw_agent_demo():
    prompt = (
        "Use the skill `colab_rag_lab` to answer: "
        "Why did my gateway refuse to start when I used agents.defaults.thinking and tools.exec.enabled, "
        "and what are the correct config knobs instead?"
    )
    out = sh(f"openclaw agent --message {shlex.quote(prompt)} --thinking high", capture=True, check=False)
    print(out)

require_secret_env("OPENAI_API_KEY")
install_node_22_and_openclaw()

cfg_path = write_openclaw_config_valid()
print("Wrote schema-valid config:", cfg_path)
print("\n--- openclaw doctor ---\n")
print(sh("openclaw doctor", capture=True, check=False))

start_gateway_background()

model = pick_model_from_openclaw()
set_default_model(model)
print("Selected model:", model)

create_custom_skill_rag()
refresh_skills()

print("\n--- OpenClaw agent run (skill-driven) ---\n")
run_openclaw_agent_demo()

print("\n--- Gateway log tail ---\n")
print(sh("tail -n 180 /tmp/openclaw_gateway.log || true", capture=True, check=False))
```

We refresh the OpenClaw skill registry and invoke the agent with a structured instruction. OpenClaw reasons about the request, selects the skill, runs the skill's fixed command through the exec tool, and returns the grounded output. This demonstrates the complete OpenClaw orchestration cycle, from configuration to autonomous agent execution.
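The demo prompt contains backticks, and `sh` hands the whole command string to bash, so the prompt must reach `openclaw` as a single, literal argument; unquoted, bash would treat the backticks as command substitution. `run_openclaw_agent_demo` handles this with `shlex.quote`. A round-trip through `shlex.split`, which follows POSIX shell quoting rules, confirms the shell would see `--message` followed by the intact prompt. `build_agent_cmd` is a hypothetical helper that mirrors the f-string used in the demo.

```python
import shlex

def build_agent_cmd(prompt, thinking="high"):
    """Build the demo's `openclaw agent` command line with the prompt safely quoted."""
    return f"openclaw agent --message {shlex.quote(prompt)} --thinking {shlex.quote(thinking)}"

if __name__ == "__main__":
    prompt = ("Use the skill `colab_rag_lab` to answer: "
              "Why did my gateway refuse to start when I used agents.defaults.thinking "
              "and tools.exec.enabled, and what are the correct config knobs instead?")
    cmd = build_agent_cmd(prompt)
    print(cmd)
    # Shell-style tokenization: the prompt survives as exactly one argument.
    print(shlex.split(cmd))
```

The same check is worth running on any prompt that contains quotes, `$`, or backticks before sending it through a shell.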

In conclusion, we deployed and operated an advanced OpenClaw workflow in a controlled Colab environment. We validated the configuration schema, started the gateway, dynamically selected a model, registered a skill, and executed it through the OpenClaw agent interface. Rather than treating OpenClaw as a wrapper, we used it as the central orchestration layer that manages authentication, skill loading, tool execution, and runtime governance. We demonstrated how OpenClaw enforces structured execution while enabling autonomous reasoning, showing how it can serve as a robust foundation for building secure, extensible agent systems in production-oriented environments.
